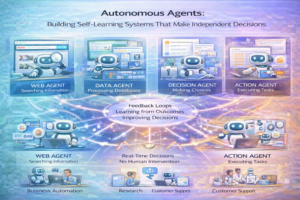

Autonomous Agents: Building Self-Learning AI Systems That Make Independent Decisions

Artificial intelligence has evolved from simple rule-based systems to sophisticated machine learning models that can recognize patterns and make predictions. The next frontier in AI development is autonomous agents: systems that perceive their environment, learn from experience, take actions, and adapt their behavior over time, often with minimal human intervention. These agents represent a significant step toward more capable and flexible systems.

Autonomous agents are reshaping industries from robotics and autonomous vehicles to software automation and network management. This guide explains what autonomous agents are, how they work, the challenges of building them, and where they are being applied.

What Are Autonomous Agents?

Autonomous agents are computational entities capable of independent action within an environment. They perceive their surroundings through sensors or data inputs, process that information, make decisions, take actions, learn from the results, and adapt their future behavior accordingly. Unlike traditional software that follows predetermined instructions, autonomous agents can handle novel situations and improve their performance over time.
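The perceive, decide, act, and learn cycle described above can be sketched in a few lines. The following is a minimal illustration, not a reference design: `ThermostatAgent`, its methods, and the dictionary-based environment are hypothetical names invented for this example, and the "learning" is a deliberately simple rule (shrink the adjustment size after an overshoot).

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatAgent:
    """Hypothetical agent that nudges a room toward a target temperature."""
    target: float = 21.0
    step: float = 1.0  # size of each adjustment; adapted from experience
    history: list = field(default_factory=list)

    def perceive(self, environment: dict) -> float:
        # Read the current state from the environment (a sensor stand-in).
        return environment["temperature"]

    def decide(self, temperature: float) -> float:
        # Choose an action: heat, cool, or do nothing when close enough.
        error = self.target - temperature
        if abs(error) < 0.1:
            return 0.0
        return self.step if error > 0 else -self.step

    def act(self, environment: dict, action: float) -> None:
        # Apply the action; here the environment responds deterministically.
        environment["temperature"] += action

    def learn(self, before: float, after: float) -> None:
        # If the action overshot the target (the error changed sign),
        # halve the step so future adjustments are gentler.
        if (self.target - before) * (self.target - after) < 0:
            self.step /= 2
        self.history.append(after)


def run(agent: ThermostatAgent, environment: dict, steps: int = 20) -> float:
    """Run the perceive-decide-act-learn loop for a fixed number of steps."""
    for _ in range(steps):
        before = agent.perceive(environment)
        action = agent.decide(before)
        agent.act(environment, action)
        after = agent.perceive(environment)
        agent.learn(before, after)
    return environment["temperature"]
```

Calling `run(ThermostatAgent(), {"temperature": 18.3})` lets the agent overshoot the target a few times, reduce its step size, and settle near 21 degrees without any change to its code.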

Key characteristics of autonomous agents include autonomy (making decisions without constant human guidance), reactivity (responding to environmental changes), proactiveness (taking initiative toward goals), and adaptability (learning and improving over time).

Types of Autonomous Agents

Reactive Agents: These agents respond directly to environmental stimuli without maintaining internal state. They’re simple, fast, and suitable for well-structured environments, but because they keep no memory of past observations, they cannot handle tasks that depend on history or planning.

Deliberative Agents: These agents maintain internal models of the world, reason about situations, and plan actions. They’re more sophisticated than reactive agents but require more computational resources and better-defined world models.

Hybrid Agents: Combining reactive and deliberative components, hybrid agents respond quickly to urgent situations while also planning for long-term goals. Most practical autonomous systems use hybrid approaches.
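To make the split between the two layers concrete, here is a small sketch of a hybrid agent on a grid map. Everything in it is an assumption made for illustration: the `HybridAgent` class, the `plan_path` helper, and the convention that `0` marks a free cell and `1` a wall. The reactive layer stops immediately when a sensor reports a blocked cell; the deliberative layer plans a route with breadth-first search over the agent's internal map.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Deliberative layer: breadth-first search over a known map.

    Returns the list of cells to visit after `start`, or [] if unreachable.
    """
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1][1:]  # drop the start cell itself
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and grid[nr][nc] == 0 and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return []


class HybridAgent:
    """Hypothetical agent with a reactive stop rule and a planning layer."""

    def __init__(self, grid, goal):
        self.grid = [row[:] for row in grid]  # internal world model
        self.goal = goal
        self.plan = []

    def step(self, position, blocked_cell=None):
        # Reactive layer: a sensed obstacle triggers an immediate stop.
        # The obstacle is recorded and the stale plan is discarded.
        if blocked_cell is not None:
            r, c = blocked_cell
            self.grid[r][c] = 1
            self.plan = []
            return position
        # Deliberative layer: (re)plan only when no valid plan exists.
        if not self.plan:
            self.plan = plan_path(self.grid, position, self.goal)
        return self.plan.pop(0) if self.plan else position
```

The design choice worth noting is that the reactive rule runs first on every step, so the agent never has to finish a planning computation before responding to something urgent.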

Learning Agents: These agents improve their behavior through experience, using reinforcement learning or other learning mechanisms. Over time, they become more effective at their tasks.

Multi-Agent Systems: Multiple autonomous agents interact and coordinate with each other, solving complex problems through cooperation or competition.

Core Technologies Enabling Autonomous Agents

Reinforcement Learning: Agents learn by trial and error to take actions that maximize cumulative reward over time, adjusting their behavior based on the reward each action produces. This approach has driven notable results in game-playing AI and robot control.
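As one concrete instance of reinforcement learning, the sketch below uses tabular Q-learning, a standard algorithm chosen here for illustration rather than one the article prescribes. The environment is a made-up five-cell corridor in which the agent starts at cell 0 and receives a reward of 1 for reaching cell 4; the function names and hyperparameter values are assumptions.

```python
import random

N_STATES = 5      # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (0, 1)  # 0 = move left, 1 = move right


def env_step(state, action):
    """Toy environment: returns (next_state, reward, done)."""
    nxt = max(0, state - 1) if action == 0 else state + 1
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with an epsilon-greedy behavior policy."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore with probability epsilon, and break ties randomly.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.choice(ACTIONS)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            nxt, reward, done = env_step(state, action)
            # Q-learning update: move the estimate toward
            # reward + discounted value of the best next action.
            target = reward + (0.0 if done else gamma * max(q[nxt]))
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
    return q


def greedy_policy(q):
    """Best known action for each non-terminal cell."""
    return [max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_STATES - 1)]
```

After training, `greedy_policy(train())` selects "move right" in every cell, which is the behavior that maximizes cumulative discounted reward in this toy setting.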

Planning and Decision Making: Techniques like A* search, Monte Carlo tree search, and dynamic programming help agents plan action sequences to achieve goals.
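To show what planning an action sequence looks like in practice, here is a compact sketch of A* search on a four-connected grid with unit step costs and a Manhattan-distance heuristic. The grid encoding (`0` free, `1` wall) and the function name `a_star` are assumptions made for this example.

```python
import heapq


def a_star(grid, start, goal):
    """Return a shortest path from start to goal as a list of cells, or None."""
    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    rows, cols = len(grid), len(grid[0])
    frontier = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    best_cost = {start: 0}
    parent = {start: None}
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # stale queue entry; a cheaper route was found already
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if not (0 <= nr < rows and 0 <= nc < cols) or grid[nr][nc] == 1:
                continue
            new_cost = cost + 1
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                parent[nxt] = cell
                heapq.heappush(frontier, (new_cost + heuristic(nxt), new_cost, nxt))
    return None
```

Because the heuristic is admissible, the first time the goal is popped from the priority queue the path found is a shortest one; an unreachable goal yields `None`.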

Perception and Sensing: Computer vision, sensor fusion, and natural language processing enable agents to understand their environment accurately.

Knowledge Representation: Ontologies, semantic networks, and knowledge graphs help agents maintain and reason about complex world models.

Communication and Coordination: In multi-agent systems, agents must negotiate, share information, and coordinate actions effectively.

Applications of Autonomous Agents

Robotics: Autonomous robots navigate physical environments, manipulate objects, and perform tasks with minimal human guidance. Applications range from manufacturing and logistics to exploration and rescue.

Autonomous Vehicles: Self-driving vehicles represent perhaps the most ambitious autonomous agent application, requiring sophisticated perception, planning, and decision-making in complex, safety-critical environments.

Network and System Management: Autonomous agents optimize network performance, detect and respond to security threats, and manage computing resources dynamically without human intervention.

Traffic Management: Autonomous agents can optimize traffic flow in smart cities by coordinating traffic light timing and managing vehicle routing.

Software Testing: Autonomous testing agents generate test cases, execute tests, and identify failures more efficiently than manual testing processes.

Game Playing: From chess to Go to complex video games, autonomous agents have achieved superhuman performance, advancing our understanding of decision-making and strategic thinking.

Development Challenges

Safety and Verification: Ensuring autonomous agents behave safely and don’t cause harm in real-world environments is critical. Traditional software testing doesn’t adequately address the challenges of autonomous systems operating in unpredictable environments.

Interpretability: Understanding why an autonomous agent made a particular decision is challenging, especially with learning-based approaches. This opacity complicates debugging and trust-building.

Simulation-to-Reality Gap: Agents trained in simulation often perform poorly when deployed in real environments, because simulators cannot reproduce every detail of real sensors, physics, and conditions. Bridging this sim-to-real gap remains a significant challenge.

Scalability: Developing autonomous agents that work reliably across varying conditions and scales is more difficult than developing algorithms for fixed, controlled environments.

Ethical and Legal Issues: As autonomous agents make decisions that affect people, questions about accountability, fairness, and responsibility become increasingly important.

Best Practices for Autonomous Agent Development

Start with Simulation: Develop and test agents in simulation before deploying in real environments. Modern simulators can capture much of real-world complexity at lower cost and risk.

Implement Monitoring: Deploy comprehensive monitoring to track agent behavior in real environments. Alerts should notify humans when behavior becomes anomalous.
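One simple way to flag anomalous behavior is to compare each new measurement of an agent metric against a rolling baseline. The sketch below assumes a hypothetical `BehaviorMonitor` class and a z-score threshold of 3; the choice of metric, window size, and threshold would all need tuning for a real deployment.

```python
from collections import deque
from statistics import mean, stdev


class BehaviorMonitor:
    """Hypothetical monitor that flags values far from the recent baseline."""

    def __init__(self, window=20, threshold=3.0, min_samples=5):
        self.values = deque(maxlen=window)  # rolling baseline of normal values
        self.threshold = threshold          # alert above this many std devs
        self.min_samples = min_samples      # wait for a baseline before alerting

    def observe(self, value: float) -> bool:
        """Record a metric value; return True if it should raise an alert."""
        alert = False
        if len(self.values) >= self.min_samples:
            mu = mean(self.values)
            sigma = max(stdev(self.values), 1e-9)  # avoid division by zero
            alert = abs(value - mu) / sigma > self.threshold
        if not alert:
            # Only normal values update the baseline, so a burst of
            # anomalies does not quietly become the new "normal".
            self.values.append(value)
        return alert
```

In use, the `True` return value would be wired to whatever notification channel alerts a human operator, for example when an agent's action latency or reward per step suddenly departs from its recent range.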

Use Hierarchical Approaches: Decompose complex problems into simpler subproblems solved by specialized agents, improving overall robustness.

Plan for Failure: Design systems assuming agents will sometimes fail. Implement graceful degradation and failsafe mechanisms.
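A failsafe can be as small as a wrapper around the agent's policy. The sketch below is one possible shape, with hypothetical names (`safe_action`, `fallback`, `low`, `high`): if the policy raises an error or returns a non-numeric result, a known-safe default is used, and otherwise the action is clamped to an allowed range.

```python
import math


def safe_action(policy, observation, fallback, low, high):
    """Run a policy defensively and always return a usable action.

    policy:   callable mapping an observation to a numeric action
    fallback: known-safe action used when the policy fails outright
    low/high: bounds that every returned policy action must respect
    """
    try:
        action = float(policy(observation))
    except Exception:
        # Failsafe: the policy crashed or returned something non-numeric.
        return fallback
    if math.isnan(action):
        # Failsafe: a NaN output cannot be clamped meaningfully.
        return fallback
    # Graceful degradation: keep the policy's intent but limit its magnitude.
    return min(max(action, low), high)
```

For example, `safe_action(lambda obs: 5.0, None, fallback=0.0, low=-1.0, high=1.0)` returns `1.0`, while a policy that raises an exception yields the fallback `0.0`.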

Continuous Learning with Safeguards: While continuous learning enables adaptation, implement safeguards ensuring agents don’t learn inappropriate behaviors.

The Future of Autonomous Agents

Autonomous agents will increasingly handle complex real-world tasks across more domains. We’ll see better sim-to-real transfer enabling more efficient training, more explainable autonomous agents through advances in interpretability, and more sophisticated multi-agent coordination solving increasingly complex problems.

Conclusion

Autonomous agents represent the next evolution of artificial intelligence, moving beyond systems that respond to queries to systems that independently perceive, reason, act, and learn. While significant challenges remain in safety, interpretability, and reliability, the potential applications are vast. As these technologies mature, autonomous agents will transform industries and create new possibilities for automation and intelligence.
