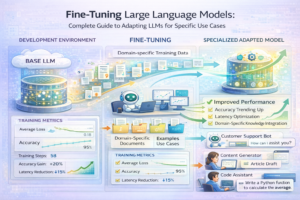

Fine-Tuning Large Language Models: Complete Guide to Adapting LLMs for Specific Use Cases

Introduction: Why Fine-Tune LLMs?

Pre-trained large language models like GPT-4, Claude, and LLaMA provide incredible general-purpose capabilities out of the box. However, using them as-is often falls short for specialized use cases. Fine-tuning—adapting a pre-trained model to your specific domain and task—can dramatically improve accuracy, reduce hallucinations, and optimize costs.

Consider a legal document review system. A general-purpose model might achieve 75% accuracy on contract clause classification. Fine-tuning on 500 labeled legal documents could push that to 94%, while simultaneously reducing API costs by 40% through more efficient predictions.

The challenge is that traditional fine-tuning is expensive and slow. A single full fine-tuning run for a 7B-parameter model could cost $2,000-$5,000 and take hours to days. This guide covers modern parameter-efficient techniques that can cut both time and cost by roughly 90%.

When Should You Fine-Tune?

Fine-tuning makes sense when:

- You have >500 labeled examples in your domain (more is better; 5,000+ is ideal)

- General models underperform on your specific task (accuracy < 80%)

- You need consistent response formatting or specialized terminology

- Cost optimization matters (fine-tuned models cost 10-50% of API usage)

- Latency requirements are <100ms (local deployment beats API calls)

- Privacy/compliance requires on-premise deployment

Fine-tuning doesn’t make sense when:

- You have <100 examples (few-shot prompting often works better)

- General models already achieve >90% accuracy

- Your use case requires frequent model updates (retraining is expensive)

- You need the latest model capabilities (fine-tuned models use fixed base)

Understanding Fine-Tuning Methods

Traditional Fine-Tuning (Full Parameter Tuning)

Updating all model weights during training.

- Pros: Maximum performance gains, simple approach

- Cons: Expensive (requires large GPU memory), slow (hours to days), risks catastrophic forgetting

- Cost: $2,000-$10,000+ per run on 7B model

- Memory: 40GB+ of GPU memory for a 7B model (weights, gradients, and optimizer state all have to fit)

- Best For: Large organizations with significant budget and data

LoRA (Low-Rank Adaptation)

Instead of updating all weights, LoRA trains small low-rank “adapter” matrices that are added to selected weight matrices. The base model stays frozen.

- Pros: 99% fewer parameters to train, 10x faster, 90% cost reduction

- Cons: Slightly lower performance gains than full fine-tuning (1-3% difference)

- Cost: $200-$500 per run

- Memory: roughly 16GB+ of GPU memory for a 7B model in 16-bit precision (the frozen weights alone take about 14GB)

- Best For: Most organizations, cost-conscious teams
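To make the parameter savings concrete, here is a minimal sketch that counts the trainable parameters LoRA adds. The shapes are assumptions chosen for illustration (hidden size 4096, 32 layers, adapters on two projection matrices per layer, rank 8), not figures taken from a specific model card; the actual count depends on the rank and on which modules you adapt.

```python
def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for one LoRA-adapted weight matrix of shape (d_out, d_in).

    LoRA learns an update B @ A, where A has shape (rank, d_in)
    and B has shape (d_out, rank). The original matrix stays frozen.
    """
    return rank * d_in + d_out * rank


# Assumed, illustrative shapes (hypothetical 7B-class model).
hidden, layers, adapted_per_layer, rank = 4096, 32, 2, 8

# Parameters in the adapted matrices themselves vs. in their LoRA adapters.
full = hidden * hidden * layers * adapted_per_layer
lora = lora_param_count(hidden, hidden, rank) * layers * adapted_per_layer

print(f"Adapted matrices: {full:,} parameters")
print(f"LoRA adapters:    {lora:,} parameters ({lora / full:.2%} of those matrices)")
```

Raising the rank or adapting more modules increases the adapter size linearly, which is the main knob for trading capacity against cost.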

QLoRA (Quantized LoRA)

Combines LoRA with model quantization. Load the base model in 4-bit precision, train low-rank adapters.

- Pros: Lowest memory footprint; can fine-tune 70B-class models on a single 48GB GPU

- Cons: Requires careful implementation, slightly larger performance gap

- Cost: $50-$200 per run

- Memory: about 48GB of GPU memory for 70B models; a 7B model typically fits in under 16GB

- Best For: Cost-optimized teams, local deployment
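A quick back-of-the-envelope estimate shows why quantization matters. The sketch below computes the memory taken by the model weights alone at different precisions; it deliberately ignores activations, adapter gradients, optimizer state, and quantization overhead, so real requirements are higher. The function name is a hypothetical helper, not part of any library.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


for name, params in [("7B", 7e9), ("70B", 70e9)]:
    fp16 = weight_memory_gb(params, 16)  # 16-bit weights, as in plain LoRA
    int4 = weight_memory_gb(params, 4)   # 4-bit weights, as in QLoRA
    print(f"{name}: {fp16:.1f} GB at 16-bit, {int4:.1f} GB at 4-bit")
```

Treat the result as a lower bound when sizing hardware: the 4-bit figure for a 70B model is already beyond most consumer GPUs before any training overhead is added.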

Prefix Tuning

Prefix tuning prepends learnable prefix vectors to the input. The frozen model processes these along with the actual input.

- Pros: Minimal parameter addition, very fast

- Cons: Performance gains modest (2-5%), can affect output quality

- Cost: $100-$300 per run

- Best For: Few-shot learning, quick experiments

Method Comparison Table

| Method | Parameters to Train | Memory Required | Training Time (500 examples) | Cost (7B Model) | Performance (relative to full fine-tuning) | Local Deployment |
|---|---|---|---|---|---|---|
| Full Fine-Tuning | 7B (100%) | 40GB+ | 12-24 hours | $2,000-$5,000 | 100% | Yes |
| LoRA | 15M (0.2%) | 16GB+ | 2-4 hours | $200-$500 | 95% | Yes |
| QLoRA | 15M (0.2%) | 48GB (for 70B) | 3-6 hours | $50-$200 | 92% | Yes |
| Prefix Tuning | 5M (0.07%) | 16GB+ | 1-2 hours | $100-$300 | 85% | Yes |
| Prompt Engineering | 0 | N/A | Minutes | $0 | 60-80% | API Dependent |

Step-by-Step Fine-Tuning Guide

Phase 1: Data Preparation

Step 1.1: Collect and Label Data

You need examples of input-output pairs relevant to your task.

- Minimum: 100 examples (quick experiment)

- Recommended: 1,000-5,000 examples (strong results)

- Optimal: 5,000-10,000+ examples (maximum performance)

Sources for training data:

- Historical customer interactions

- Internal documentation + manual Q&A

- Crowdsourced labeling (Mechanical Turk, Upwork)

- Synthetic data generation from GPT-4

- Domain-specific datasets (academic, public datasets)

Step 1.2: Format Data

Convert data to standard format (JSON Lines):

{"prompt": "Classify the sentiment: 'This product is amazing!'", "completion": " Positive"}

{"prompt": "Classify the sentiment: 'Terrible experience, would not recommend'", "completion": " Negative"}

Key considerations:

- Consistent formatting (spacing, capitalization matters)

- Include roles if using chat models: {"messages": [{"role": "user", "content": "…"}, {"role": "assistant", "content": "…"}]}

- Ensure completions are complete thoughts (don’t cut off mid-sentence)

- Split data: 80% training, 10% validation, 10% test
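The formatting and splitting steps can be sketched with the standard library alone. The example records, the fixed seed, and the helper names below are assumptions for illustration; in practice you would load your own labeled data and write each split to its own `.jsonl` file.

```python
import json
import random


def split_dataset(records, train_frac=0.8, val_frac=0.1, seed=42):
    """Shuffle records and split them into train/validation/test lists."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # seeded for a reproducible split
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],  # whatever remains is the test set
    )


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Placeholder data standing in for real labeled examples.
records = [
    {"prompt": f"Classify the sentiment: 'example {i}'", "completion": " Positive"}
    for i in range(100)
]
train_set, val_set, test_set = split_dataset(records)
print(len(train_set), len(val_set), len(test_set))
print(to_jsonl(train_set[:1]))
```

Splitting once, with a fixed seed, and keeping the test set untouched until final evaluation avoids leaking test examples into training across experiments.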

Step 1.3: Data Quality Assessment

- Remove duplicates and near-duplicates

- Check for label consistency (same inputs shouldn’t have different outputs)

- Remove outliers and obviously wrong examples

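- Aim for a balanced class distribution (for example, roughly 50/50 for binary classification)

- Check for overlap with the model’s pre-training data (for example, prompt the base model with the first 10-20 tokens of an example and see whether it reproduces the rest)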
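Two of the checks above, duplicate removal and label consistency, are easy to automate. The sketch below is one simple way to do it, assuming records in the prompt/completion format shown earlier. It only catches duplicates that differ in case or whitespace; true near-duplicate detection would need a fuzzy similarity measure.

```python
from collections import defaultdict


def normalize(text):
    """Lowercase and collapse whitespace so trivially different prompts compare equal."""
    return " ".join(text.lower().split())


def audit(records):
    """Return (deduplicated records, prompts that appear with conflicting completions)."""
    seen = set()
    unique = []
    completions_by_prompt = defaultdict(set)
    for rec in records:
        prompt_key = normalize(rec["prompt"])
        completion = rec["completion"].strip()
        completions_by_prompt[prompt_key].add(completion)
        if (prompt_key, completion) not in seen:
            seen.add((prompt_key, completion))
            unique.append(rec)
    conflicts = sorted(p for p, c in completions_by_prompt.items() if len(c) > 1)
    return unique, conflicts


# Small illustrative sample (made-up examples).
sample = [
    {"prompt": "Great product!", "completion": " Positive"},
    {"prompt": "great  product!", "completion": " Positive"},  # duplicate after normalization
    {"prompt": "Slow delivery", "completion": " Negative"},
    {"prompt": "Slow delivery", "completion": " Positive"},    # conflicting label
]
unique, conflicts = audit(sample)
print(len(unique), conflicts)
```

Conflicting prompts are returned rather than dropped so that a human can decide which label is correct.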

Phase 2: Fine-Tuning Execution

Option A: Use Managed Services

Easiest approach if you don’t want to manage infrastructure:

- OpenAI Fine-Tuning API: Simple, integrated, but limited to OpenAI models. Cost: $0.03-$0.30 per 1K tokens training

- Anthropic Claude Fine-Tuning: Available for select Claude models through Amazon Bedrock

- Google Vertex AI Fine-Tuning: Works with PaLM and Gemini models

- Replicate: One-click fine-tuning for open models, $0.001-$0.005 per token

- Modal: Fine-tuning in cloud, pay-per-hour compute costs

Option B: Open Source (Local/Cloud)

Maximum control and cost optimization:

Using Hugging Face Transformers:

    from transformers import AutoModelForCausalLM, AutoTokenizer, Trainer, TrainingArguments

    # The "-hf" repository holds the weights in Transformers format.
    # Llama 2 is gated: accept the license on Hugging Face and log in first.
    model_name = "meta-llama/Llama-2-7b-hf"
    model = AutoModelForCausalLM.from_pretrained(model_name)
    tokenizer = AutoTokenizer.from_pretrained(model_name)

    training_args = TrainingArguments(
        output_dir="./results",
        num_train_epochs=3,
        per_device_train_batch_size=8,
        save_steps=100,
        save_total_limit=2,
        learning_rate=2e-5,
    )

    # train_dataset: your tokenized training split from Phase 1.
    trainer = Trainer(
        model=model,
        args=training_args,
        train_dataset=train_dataset,
    )
    trainer.train()

Using LoRA (Recommended):

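Install: pip install peft

    from peft import get_peft_model, LoraConfig, TaskType

    peft_config = LoraConfig(
        task_type=TaskType.CAUSAL_LM,
        r=8,  # LoRA rank
        lora_alpha=32,  # scaling factor applied to the adapter update
        lora_dropout=0.1,
        target_modules=["q_proj", "v_proj"],  # which weight matrices get adapters
    )

    # Wrap the base model loaded above; only the adapter weights are trainable.
    model = get_peft_model(model, peft_config)
    model.print_trainable_parameters()

    # Train with the same TrainingArguments and dataset as in the previous example.
    trainer = Trainer(model=model, args=training_args, train_dataset=train_dataset)
    trainer.train()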

Using QLoRA (For Large Models):

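    import torch
    from transformers import AutoModelForCausalLM, BitsAndBytesConfig
    from peft import get_peft_model, prepare_model_for_kbit_training

    # 4-bit quantization settings (requires: pip install bitsandbytes)
    bnb_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_quant_type="nf4",  # NF4, the data type used in the QLoRA paper
        bnb_4bit_compute_dtype=torch.float16,
    )

    # Load model in 4-bit
    model = AutoModelForCausalLM.from_pretrained(
        "meta-llama/Llama-2-70b-hf",
        quantization_config=bnb_config,
        device_map="auto",
    )

    model = prepare_model_for_kbit_training(model)
    model = get_peft_model(model, peft_config)  # peft_config from the LoRA example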

Phase 3: Validation and Evaluation

Evaluation Metrics:

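- Perplexity: How well the model predicts the validation set (lower is better). As a rough illustration, a base model might score ~10 and a good fine-tune ~5-8; absolute values depend on the tokenizer and the data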

- Task-Specific Metrics: Accuracy, F1 score, BLEU score depending on task

- Comparison: Fine-tuned model vs. base model vs. zero-shot prompt engineering
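Perplexity is the exponential of the average negative log-likelihood per token. A minimal sketch, assuming you already have the natural-log probability the model assigned to each reference token (the input list below is synthetic):

```python
import math


def perplexity(token_log_probs):
    """Perplexity = exp(mean negative log-likelihood per token), using natural logs."""
    nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(nll)


# Sanity check: a model that spreads probability uniformly over 8 choices
# for every token should have a perplexity of about 8.
uniform = [math.log(1 / 8)] * 5
print(round(perplexity(uniform), 3))
```

If you evaluate with a training framework that reports the mean cross-entropy loss on the validation set, `math.exp(loss)` gives the same quantity.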

Evaluation Script:

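    from sklearn.metrics import accuracy_score, precision_score, recall_score, f1_score

    # test_inputs: tokenized test prompts, as returned by the tokenizer
    output_ids = model.generate(**test_inputs, max_new_tokens=10)
    predictions = tokenizer.batch_decode(output_ids, skip_special_tokens=True)

    # extract_labels: your own helper that maps generated text to class labels
    pred_labels = extract_labels(predictions)
    true_labels = test_dataset['labels']

    # average="macro" handles multi-class tasks; the default only supports binary labels
    print(f"Accuracy: {accuracy_score(true_labels, pred_labels):.3f}")
    print(f"Precision: {precision_score(true_labels, pred_labels, average='macro'):.3f}")
    print(f"Recall: {recall_score(true_labels, pred_labels, average='macro'):.3f}")
    print(f"F1: {f1_score(true_labels, pred_labels, average='macro'):.3f}")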

Phase 4: Deployment

For LoRA Models:

The deployment is lightweight since you only need to load the base model + small adapter weights:

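    from peft import AutoPeftModelForCausalLM
    from transformers import AutoTokenizer

    model = AutoPeftModelForCausalLM.from_pretrained(
        "path/to/lora_checkpoint",
        device_map="auto"
    )
    # Use the tokenizer of the base model the adapter was trained on.
    tokenizer = AutoTokenizer.from_pretrained("meta-llama/Llama-2-7b-hf")

    inputs = tokenizer("Classify the sentiment: 'This product is amazing!'", return_tensors="pt").to(model.device)
    outputs = model.generate(**inputs, max_new_tokens=100)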

Model Deployment Options:

- Local/On-Premise: Deploy to your servers using vLLM or TGI. Cost: $200-$500/month hardware.

- Cloud (AWS EC2, GCP Compute): Deploy to cloud instance. Cost: $200-$2000/month depending on instance size.

- Serverless: AWS SageMaker, Modal, or Replicate. Cost: $0.001-$0.01 per prediction.

- API Gateway: Wrap in FastAPI and use typical model serving infrastructure.

Real-World Case Studies

Case Study 1: Customer Service Chatbot – Financial Services

A bank had an existing chatbot achieving 65% customer satisfaction. They fine-tuned Llama-2-7b on 3,000 examples of high-quality customer interactions and regulatory compliance guidelines.

Results:

- Customer satisfaction improved from 65% to 91%

- Hallucinations reduced by 94%

- Cost per conversation dropped from $0.15 (API) to $0.02 (local deployment)

- Processing time: 2.5 seconds per response (acceptable for banking)

Investment:

- Data labeling: $8,000 (3,000 examples × $2.67)

- Fine-tuning (10 runs, experimentation): $1,500

- Infrastructure (6 months): $2,000

- Total: $11,500

Break-even: about 4 months, through cost savings and improved customer satisfaction

Case Study 2: Legal Document Classification – Law Firm

A mid-size law firm needed to classify contracts into 15 categories for intake process. Manual review took 30 minutes per contract.

Approach: Fine-tuned GPT-3.5-turbo on 2,000 labeled contracts using OpenAI’s API.

Results:

- Accuracy: 94% (vs 82% with few-shot prompting)

- Processing time: 20 seconds per contract (vs 30 minutes manual)

- Cost per contract: $0.08 (vs $8 manual labor)

Case Study 3: Medical Report Generation – Healthcare Startup

The startup required HIPAA-compliant on-premise deployment, so it fine-tuned Mistral-7B on 5,000 de-identified medical reports.

It used QLoRA to train on a single A100 GPU (cost: $2/hour to rent).

Results:

- Accuracy on medical terminology: 96%

- Latency: 800ms per report

- Cost: $300/month infrastructure (vs $15,000/month API usage)

- Privacy: 100% compliant (no data leaves the premises)

Common Pitfalls and Solutions

Pitfall 1: Overfitting on Small Datasets

With <500 examples, models memorize rather than learn.

Solutions:

- Increase regularization (higher dropout, lower learning rate)

- Use early stopping (stop training when validation loss increases)

- Collect more data

- Use smaller LoRA rank (r=4 instead of r=8)
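Early stopping can be sketched as a small patience loop over per-epoch validation losses. The function below is an illustrative stand-alone version with assumed parameter names; if you train with Hugging Face's `Trainer`, its `EarlyStoppingCallback` provides the same idea without custom code.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return the index of the best epoch seen before training would stop.

    Training stops once validation loss has failed to improve by more than
    `min_delta` for `patience` consecutive epochs.
    """
    best_loss, best_epoch, waited = float("inf"), -1, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                break  # later epochs are never run
    return best_epoch


# Synthetic validation losses: improvement stalls after epoch 2.
losses = [1.20, 0.95, 0.90, 0.93, 0.97, 0.85]
print(early_stopping_epoch(losses, patience=2))
```

A larger `patience` tolerates noisy validation curves at the cost of extra training time; with small datasets a low value is usually the safer choice against overfitting.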

Pitfall 2: Catastrophic Forgetting

Model loses general knowledge after fine-tuning on specific domain.

Solutions:

- Include diverse examples (not just your domain)

- Use lower learning rate (1e-5 to 5e-5)

- Use LoRA or other parameter-efficient methods

- Validate on both domain-specific and general tasks

Pitfall 3: Poor Data Quality

Garbage in, garbage out. Low-quality training data limits performance ceiling.

Solutions:

- Manually review a sample of the training data (at least 10%)

- Use multiple annotators and measure agreement (Cohen’s kappa >0.8)

- Remove examples where annotators disagree

- Do quality checks every 500 examples
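Cohen's kappa compares the agreement two annotators actually reach with the agreement expected by chance. A minimal from-scratch sketch for two annotators follows; the label lists are made up for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies, summed over labels.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


annotator_a = ["pos"] * 5 + ["neg"] * 5
annotator_b = ["pos"] * 4 + ["neg"] * 6  # disagrees with annotator_a on one item
print(round(cohens_kappa(annotator_a, annotator_b), 3))
```

Note that 90% raw agreement in this sample corresponds to a kappa of only about 0.8, because half of the agreement would be expected by chance.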

Pitfall 4: Mismatched Domain

Fine-tuning on historical data that doesn’t match current needs.

Solutions:

- Validate on recent/current data

- Use continuous learning (regularly retrain with new data)

- Monitor performance drift monthly

Cost Breakdown and ROI Analysis

Scenario: Medium-Scale Fine-Tuning (5,000 examples, 10 training runs)

| Cost Component | Amount | Notes |
|---|---|---|
| Data Collection & Labeling | $15,000 | 5,000 examples × $3/example |
| Fine-Tuning (10 runs) | $2,000 | Using QLoRA, $200/run |
| Infrastructure (3 months) | $3,000 | GPU hosting, monitoring, versioning |
| Evaluation & Testing | $2,000 | Additional human review |
| Total Investment | $22,000 | |

ROI Comparison (Annual):

Scenario 1: API-based (OpenAI GPT-4)

- Cost per prediction: $0.05 (avg)

- 100,000 predictions/month = $5,000/month = $60,000/year

- Year 1 cost: $60,000 (no fine-tuning investment)

Scenario 2: Fine-tuned Model Deployed

- Cost per prediction: $0.005 (local deployment)

- 100,000 predictions/month = $500/month = $6,000/year

- Year 1 cost: $6,000 + $22,000 = $28,000

- Savings: $32,000 (53% reduction)

Break-even: about 5 months ($22,000 investment ÷ $4,500 monthly savings). Over the first 12 months the fine-tuned model is about 2.1x cheaper; in later years, with the investment paid off, it is 10x cheaper ($6,000 vs. $60,000).
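The break-even point can be worked out directly from the monthly figures in this scenario: the up-front investment divided by the monthly savings. The sketch below uses the $22,000 investment and the $5,000 vs. $500 monthly running costs from above; the function name is a hypothetical helper.

```python
import math


def break_even_months(investment, api_monthly, local_monthly):
    """Months until cumulative savings cover the up-front investment.

    Returns None when the fine-tuned deployment is not cheaper per month.
    """
    monthly_savings = api_monthly - local_monthly
    if monthly_savings <= 0:
        return None
    return investment / monthly_savings


investment, api_monthly, local_monthly = 22_000, 5_000, 500
months = break_even_months(investment, api_monthly, local_monthly)

year1_api = 12 * api_monthly
year1_local = investment + 12 * local_monthly

print(f"Break-even after {months:.1f} months (first fully paid-off month: {math.ceil(months)})")
print(f"Year 1: API ${year1_api:,} vs. fine-tuned ${year1_local:,}")
```

Plugging in your own volumes is worthwhile before committing: at a tenth of this monthly volume the savings shrink to $450 a month and the same investment takes about four years to pay back.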

Advanced Fine-Tuning Techniques

Multi-Task Learning:

Train on multiple related tasks simultaneously, improving generalization.

Example: Fine-tune on sentiment classification AND emotion detection together. The shared knowledge improves both tasks.

Instruction-Based Fine-Tuning:

Instead of task-specific examples, train on diverse instructions with examples. Better generalization and instruction following.

{"instruction": "Classify the sentiment", "input": "This product is great", "output": "Positive"}

{"instruction": "Translate to Spanish", "input": "Hello world", "output": "Hola mundo"}
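Records in this format are usually rendered into a single training string before tokenization. The sketch below uses an assumed prompt template with "### Instruction / Input / Response" section markers; the exact template is a design choice, and whatever you pick must be used identically at training and inference time.

```python
import json


def format_instruction(record: dict) -> str:
    """Render an instruction record as one training string (assumed template)."""
    parts = [f"### Instruction:\n{record['instruction']}"]
    if record.get("input"):  # the input field is optional
        parts.append(f"### Input:\n{record['input']}")
    parts.append(f"### Response:\n{record['output']}")
    return "\n\n".join(parts)


line = '{"instruction": "Classify the sentiment", "input": "This product is great", "output": "Positive"}'
text = format_instruction(json.loads(line))
print(text)
```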

Continued Pre-training:

Before fine-tuning on task data, train on domain-specific unlabeled text. Significantly improves performance on specialized domains.

Example: For legal documents, first continue pre-training on a legal corpus (Wikipedia articles about law, case texts, legal databases), then fine-tune on the classification task.

Monitoring and Maintenance

Post-Deployment Monitoring:

- Track performance metrics monthly

- Monitor for distribution shift (new types of inputs)

- Collect user feedback on predictions

- Plan retraining every 3-6 months with new data

When to Retrain:

- Performance drops >5%

- Distribution shift detected

- Major domain changes (new contract types, regulatory changes)

- New base model released (Llama 3, improved performance)
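The first trigger can be automated with a simple threshold check. This sketch assumes the ">5%" drop means five absolute percentage points of accuracy relative to the accuracy measured at deployment; both the interpretation and the function name are assumptions for illustration.

```python
def should_retrain(baseline_accuracy, recent_accuracies, max_drop=0.05):
    """Flag retraining when the latest accuracy is more than `max_drop` below baseline."""
    if not recent_accuracies:
        return False  # nothing measured yet
    return baseline_accuracy - recent_accuracies[-1] > max_drop


# Synthetic monthly accuracy measurements after deployment.
monthly = [0.93, 0.92, 0.90, 0.87]
print(should_retrain(0.94, monthly))
```

In practice you would likely smooth over several measurements rather than react to a single month, since small test samples make accuracy estimates noisy.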

Key Takeaways

- Start with LoRA: Best balance of performance, cost, and simplicity. 95% of the gains of full fine-tuning at 10% of the cost.

- Data quality > quantity: 1,000 high-quality examples beats 10,000 mediocre ones. Invest in data quality.

- ROI is compelling: Most fine-tuning projects break even within 3-6 months through cost savings alone. Add accuracy improvements and the case is even stronger.

- Validation is critical: Always evaluate on a held-out test set. Fine-tuning can hurt performance if done incorrectly.

- Plan for maintenance: Fine-tuned models require periodic retraining as data evolves. Budget for this from the start.

- Consider the hybrid approach: Use fine-tuned models for core tasks, APIs for edge cases and latest capabilities.

Getting Started

Start with a pilot project on one specific use case where you have labeled data. Allocate 2-3 weeks and $5,000-$10,000. Measure both accuracy improvements and cost savings. If successful, expand to other use cases. The fine-tuning knowledge compounds as you build organizational expertise.
